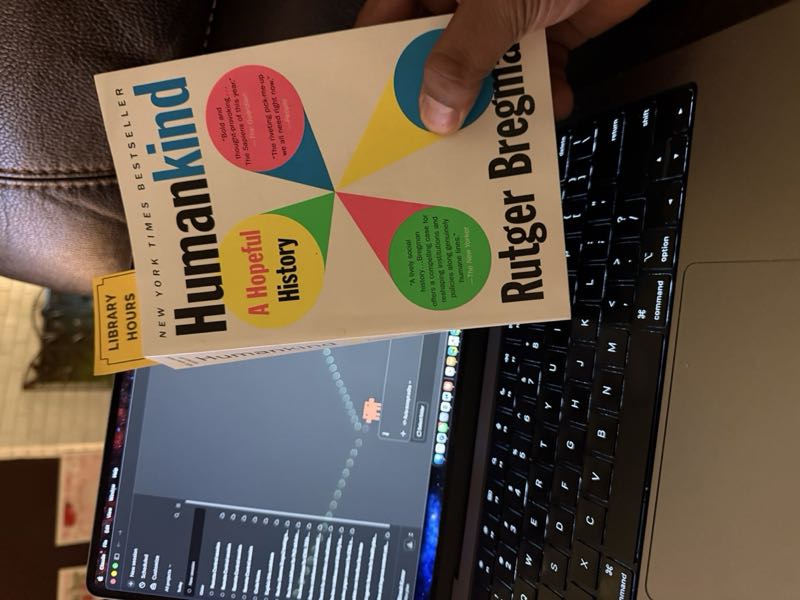

For the last few days, my best friend and I have been discussing AI and jobs. The question that keeps returning is simple but hard: is artificial intelligence just another tool, like earlier waves of computing, or is it something more disruptive, something that may start to replace human labor across sectors rather than simply support it? That question brought me back to Rutger Bregman’s Humankind: A Hopeful History, a book that feels surprisingly relevant to the future of work because it is really a book about what kind of creatures we think human beings are. (Bloomsbury Publishing)

Bregman’s central claim is that the modern world has been shaped by an unduly cynical view of human nature. We are often told that people are selfish, competitive, and only thinly civilized, and that social order depends on discipline from above. Against this, Humankind argues that it is not naive but realistic to begin from a more hopeful assumption: people are often decent, cooperative, and responsive to trust. Bregman revisits famous stories and studies that have long supported darker views of humanity and tries to show that many of them were misread, overstated, or built on weak foundations. Whether or not one accepts every part of his case, the book’s larger point is clear: the stories we tell about human nature shape the institutions we build.

This is one reason the book remains useful. It is not just a defense of optimism. It is an argument about design. If we assume that people are lazy or dangerous, we build systems of suspicion. If we assume they are capable of cooperation, we design institutions around trust, reciprocity, and shared responsibility. In that sense, Humankind is not simply about the past. It is about the moral assumptions hidden inside politics, economics, and public policy.

Some of the evidence Bregman leans on also has empirical support outside the book itself. In the 2012 Nature paper “Spontaneous giving and calculated greed,” David Rand, Joshua Greene, and Martin Nowak found across a set of economic-game experiments that faster decisions tended to be more cooperative, while slower deliberation often reduced cooperation. Their interpretation was not that reflection is always bad, but that in ordinary social life cooperation can become intuitive because it is often adaptive. A developmental parallel appears in Felix Warneken and Michael Tomasello’s 2006 Science paper, which found that children as young as 18 months readily helped others achieve simple goals, even without reward. Taken together, these studies suggest that prosocial behavior is not always a fragile layer placed over selfish instinct. Sometimes it appears early and operates quickly. (Greater Good)

There are also moments in history where individual human judgment, against every institutional pressure, may have prevented the worst outcomes imaginable. In 1962, during the Cuban Missile Crisis, Soviet submarine officer Vasily Arkhipov refused to authorize a nuclear torpedo launch even as depth charges exploded around his vessel and his fellow officers urged him to fire. He was the only one of three senior officers who withheld consent, and that single refusal may have prevented a nuclear exchange. Two decades later, in 1983, Soviet lieutenant colonel Stanislav Petrov watched his early-warning system report incoming American missiles and chose not to escalate. He judged the alarm was false, reported it as a malfunction, and was right. As Veritasium’s account of these incidents makes vivid, in both cases a human being chose restraint over protocol, and the world continued. These are not stories about systems working as designed. They are stories about people overriding systems, trusting their own sense that something was wrong with the information in front of them. Bregman would recognize them immediately: evidence that under pressure, people can act from something better than fear.

That matters when we shift from human nature to artificial intelligence. Much of the public conversation about AI and employment is framed in technical language: productivity, efficiency, substitution, augmentation. But work is not only an economic variable. As a 2025 Scientific Reports paper by Jo-An Occhipinti and colleagues argues, work is also tied to identity, purpose, social integration, and mental health. Using a system-dynamics model with Australian data, the authors estimate that even a moderate increase in the AI-capital-to-labour ratio could double labour underutilisation by the mid-2050s, while also lowering disposable income and consumption. The exact numbers belong to one model and should not be treated as destiny, but the paper is valuable because it captures something many labor forecasts miss: the damage from displacement is existential as well as financial. (Nature)

This is exactly the point my friend and I have been circling. Earlier tools often created new work even as they destroyed older tasks. Computers changed offices, factories, and communication, but they also opened new industries and new kinds of employment. AI may still do that. But it may also be different in one important respect: it reaches into cognitive and professional work that many people once assumed was relatively secure. If that happens at scale, then societies will not just face a transition in skills. They will face a crisis of belonging. People do not only need wages. They need structure, usefulness, and some durable sense that their participation matters.

This is where Bregman becomes politically relevant. If we begin from the more hopeful anthropology that he defends, then the policy question around AI displacement looks different. Workers affected by automation are not failed individuals. They are citizens absorbing a transformation that market logic alone cannot morally justify. A thoughtful response would aim not only to preserve income, but also to protect dignity, participation, and meaningful forms of social membership.

That is why Humankind still matters in the age of AI. Its deepest argument is not that people are perfect. It is that a society built on suspicion will reproduce suspicion, while a society built on trust may call forth better forms of collective life. If AI causes serious job loss, the most important question may not be what the technology can do. It may be what we believe people are for.